Data Pipeline

Daily Stock Data Ingestion Pipeline

A scripted workflow that scraped daily market data, joined supporting financial datasets, computed valuation-oriented features, and wrote the results back to SQL for dashboard use.

R · SQL · Web Scraping · Yahoo Finance · Power BI · Feature Engineering · SQL Pipelines · Market Data

Outcome: Built a repeatable daily ingestion workflow that turned scraped market data into structured SQL tables for downstream analysis and dashboards.

Problem

Market dashboards are only as useful as the refresh pipeline behind them.

The challenge was not just pulling daily stock prices. It was combining them with company and financial information so that the downstream dashboards showed more than raw closing values.

Approach

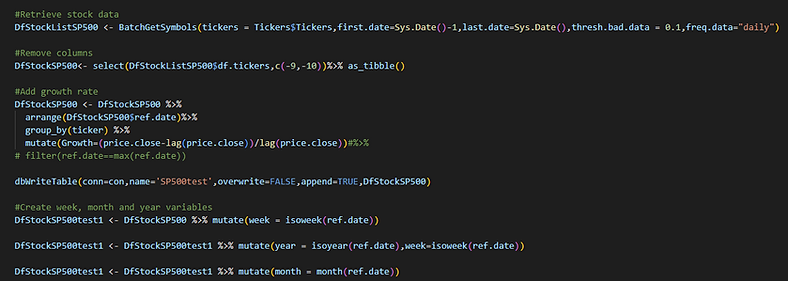

I built an R-based ingestion workflow that:

- scraped daily stock data from Yahoo Finance

- loaded supporting datasets such as company information and financial report fields

- joined the datasets into a cleaner analytical structure

- calculated additional derived features, including volatility- and valuation-oriented signals

- wrote multiple output tables back into SQL for dashboard consumption
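The join and feature steps above can be sketched roughly as follows. The original workflow was written in R; this is a minimal Python illustration using only the standard library, not the project's actual code. The sample records, the field names (`close`, `eps`), the `build_features` helper, and the specific features (daily return, rolling standard deviation of returns, price-to-earnings ratio) are all assumptions chosen to stand in for the "volatility- and valuation-oriented signals" described here.

```python
from statistics import stdev

# Hypothetical sample inputs standing in for scraped prices and company data.
prices = [
    {"ticker": "AAA", "date": "2024-01-02", "close": 100.0},
    {"ticker": "AAA", "date": "2024-01-03", "close": 102.0},
    {"ticker": "AAA", "date": "2024-01-04", "close": 101.0},
    {"ticker": "AAA", "date": "2024-01-05", "close": 104.0},
]
fundamentals = {"AAA": {"name": "Example Corp", "eps": 5.0}}


def build_features(prices, fundamentals, window=3):
    """Join prices to fundamentals and add return, volatility, and P/E fields."""
    rows = []
    returns_by_ticker = {}
    prev_close = {}
    # Sort so that each ticker's rows are processed in date order.
    for p in sorted(prices, key=lambda r: (r["ticker"], r["date"])):
        info = fundamentals.get(p["ticker"])
        if info is None:
            continue  # inner join: skip tickers with no fundamentals

        ticker = p["ticker"]
        daily_return = None
        if ticker in prev_close:
            daily_return = p["close"] / prev_close[ticker] - 1.0
            returns_by_ticker.setdefault(ticker, []).append(daily_return)
        prev_close[ticker] = p["close"]

        # Volatility proxy: sample standard deviation of the last `window` returns.
        recent = returns_by_ticker.get(ticker, [])[-window:]
        volatility = stdev(recent) if len(recent) >= 2 else None

        # Valuation proxy: price-to-earnings, skipped when EPS is missing or zero.
        pe_ratio = p["close"] / info["eps"] if info["eps"] else None

        rows.append({
            **p,
            "name": info["name"],
            "daily_return": daily_return,
            "volatility": volatility,
            "pe_ratio": pe_ratio,
        })
    return rows


features = build_features(prices, fundamentals)
```

The first row for each ticker has no return, and volatility stays empty until at least two returns are available, so the output tables would need to tolerate nulls in those columns.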

Technical Value

This project is a strong example of recurring data engineering on a small scale.

It involved:

- scheduled extraction

- multi-source joins

- feature engineering

- structured output design for BI tools

That combination matters because a dashboard rarely becomes useful from a single source alone. Most of the value comes from how the data is prepared before anyone sees it.
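One preparation detail that matters for a scheduled job is that re-running a day's load should not duplicate rows in the tables the dashboard reads. The sketch below shows one way to get that behavior, assuming a SQLite database and a hypothetical `daily_features` table keyed on ticker and date. The original project wrote to SQL from R, and its actual schema and database engine are not described here, so the table name, columns, and `write_daily_features` helper are illustrative only.

```python
import sqlite3


def write_daily_features(conn, rows):
    """Create the output table if needed and upsert one batch of rows."""
    conn.execute(
        """
        CREATE TABLE IF NOT EXISTS daily_features (
            ticker   TEXT NOT NULL,
            date     TEXT NOT NULL,
            close    REAL,
            pe_ratio REAL,
            PRIMARY KEY (ticker, date)
        )
        """
    )
    # INSERT OR REPLACE overwrites any existing row with the same (ticker, date),
    # so repeating a run for the same day leaves one row per key.
    conn.executemany(
        "INSERT OR REPLACE INTO daily_features (ticker, date, close, pe_ratio) "
        "VALUES (:ticker, :date, :close, :pe_ratio)",
        rows,
    )
    conn.commit()


conn = sqlite3.connect(":memory:")  # in-memory database for illustration
batch = [{"ticker": "AAA", "date": "2024-01-02", "close": 100.0, "pe_ratio": 20.0}]

write_daily_features(conn, batch)
write_daily_features(conn, batch)  # simulated re-run of the same day

row_count = conn.execute("SELECT COUNT(*) FROM daily_features").fetchone()[0]
```

Keying the table on ticker and date keeps the refresh safe to retry, which is useful when a scrape fails partway through and the job has to be run again.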

Why It Mattered

Even though the subject matter was outside housing, the technical pattern is still relevant to how I work now.

It showed how to turn messy external data into a reusable analytical asset, and that same pattern carries over directly into research infrastructure, monitoring systems, and reporting workflows.